The Smart Doll With Emotion Recognition has two scenarios.

In the first scenario, the doll interacts with the child through interactive audio feedback based on the child's emotional state. The analysis and recording of the child's face and emotional state can run for up to 13 hours in always-on mode on a 3.7 V, 4000 mAh battery. This runtime can be extended further with power-saving techniques such as auto-off modes and wake-up on IMU activity.
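The battery figures above imply a rough power budget. The sketch below works through the arithmetic (3.7 V × 4 Ah = 14.8 Wh, which over 13 h means an average draw of about 1.14 W, or roughly 308 mA) and then estimates how duty cycling, such as waking on IMU activity, could stretch the runtime. It assumes the full nominal capacity is usable and that the active state draws the same power as always-on mode; the sleep power and active fraction are hypothetical placeholders, not measured values from the project.

```python
# Back-of-the-envelope energy budget for the Smart Doll battery.
# Assumptions (not from the source): the full nominal capacity is usable,
# and conversion losses, temperature and ageing are ignored.

NOMINAL_VOLTAGE_V = 3.7   # battery nominal voltage reported for the doll
CAPACITY_MAH = 4000       # battery capacity reported for the doll
ALWAYS_ON_HOURS = 13      # reported always-on runtime


def battery_energy_wh(voltage_v: float, capacity_mah: float) -> float:
    """Stored energy in watt-hours: V * Ah."""
    return voltage_v * capacity_mah / 1000.0


def average_power_w(energy_wh: float, runtime_h: float) -> float:
    """Average power draw implied by draining energy_wh over runtime_h."""
    return energy_wh / runtime_h


def duty_cycled_runtime_h(energy_wh: float, active_power_w: float,
                          sleep_power_w: float, active_fraction: float) -> float:
    """Runtime when the device is fully active only a fraction of the time
    (e.g. woken by IMU activity) and sleeps for the rest."""
    if not 0.0 <= active_fraction <= 1.0:
        raise ValueError("active_fraction must be between 0 and 1")
    avg_w = active_fraction * active_power_w + (1.0 - active_fraction) * sleep_power_w
    return energy_wh / avg_w


energy_wh = battery_energy_wh(NOMINAL_VOLTAGE_V, CAPACITY_MAH)  # about 14.8 Wh
always_on_w = average_power_w(energy_wh, ALWAYS_ON_HOURS)       # about 1.14 W
always_on_ma = CAPACITY_MAH / ALWAYS_ON_HOURS                   # about 308 mA

# HYPOTHETICAL figures for illustration only: sleep draw and share of time awake.
SLEEP_POWER_W = 0.05
ACTIVE_FRACTION = 0.25
extended_h = duty_cycled_runtime_h(energy_wh, always_on_w, SLEEP_POWER_W, ACTIVE_FRACTION)

print(f"stored energy:        {energy_wh:.1f} Wh")
print(f"always-on average:    {always_on_w:.2f} W ({always_on_ma:.0f} mA)")
print(f"duty-cycled estimate: {extended_h:.1f} h")
```

Setting `ACTIVE_FRACTION` to 1.0 recovers the 13-hour always-on figure, which is a quick sanity check on the model; the real gain from duty cycling depends on the board's actual sleep consumption and on how often the IMU wakes it.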

In the second scenario, nViso tested the demonstrator in a pilot consisting of 12 sessions with 12 different children in a controlled environment. During these sessions the doll was tested in play mode. A room was specially prepared for the tests and equipped with: the Smart Doll on a toy high chair; a children’s chair facing the doll at a distance of no more than 1 m; a camera behind the doll, facing the child once seated; a chair for the facilitator; and a desk for the researchers.

Children were asked to sit in front of the talking doll for about 10 minutes. Each child was in the room with a coordinator and one or two researchers, and was told that they would be asked some questions. While the coordinator asked the child some general questions, the doll unexpectedly greeted the child and introduced herself.

This pilot was designed to test the following functionalities:

- Robustness of the system.

- Ability of the doll to detect faces and process emotions while interacting with a child.

- Accuracy of the inferred emotions, assessed by comparing them with the researchers’ observations taken during each session and refined by reviewing the session videos offline.

The robustness of the system and its functionalities were verified, and the tests showed stable operation.

The results were derived by comparing the emotion profiles recorded by the EoT board running the emotion engine with the annotations made by the researchers during the test sessions. The accuracy of the doll in detecting and processing emotions while interacting with a child is 84.7%.
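The section does not state exactly how the engine's emotion profiles and the researchers' annotations were scored against each other. A minimal sketch, assuming both are reduced to one emotion label per aligned time segment, is to count the segments where the two labels agree. The function names, label set and session data below are hypothetical and serve only to illustrate the comparison.

```python
from collections import Counter
from typing import Dict, List, Sequence, Tuple


def agreement_accuracy(predicted: Sequence[str], annotated: Sequence[str]) -> float:
    """Share of aligned segments where the engine's emotion label
    equals the researchers' annotation."""
    if len(predicted) != len(annotated):
        raise ValueError("predicted and annotated must be aligned segment by segment")
    if not predicted:
        raise ValueError("nothing to compare")
    matches = sum(p == a for p, a in zip(predicted, annotated))
    return matches / len(predicted)


def pooled_accuracy(sessions: Dict[str, Tuple[List[str], List[str]]]) -> float:
    """Accuracy over all sessions pooled together, so longer sessions weigh more."""
    matches = total = 0
    for predicted, annotated in sessions.values():
        if len(predicted) != len(annotated):
            raise ValueError("each session must be aligned segment by segment")
        matches += sum(p == a for p, a in zip(predicted, annotated))
        total += len(predicted)
    if total == 0:
        raise ValueError("nothing to compare")
    return matches / total


def confusion_counts(predicted: Sequence[str], annotated: Sequence[str]) -> Counter:
    """Counts of (annotated, predicted) pairs, to see which emotions get confused."""
    return Counter(zip(annotated, predicted))


# HYPOTHETICAL labels for two short sessions: (engine output, researcher notes).
sessions = {
    "child_01": (["happy", "neutral", "happy", "surprise", "neutral"],
                 ["happy", "neutral", "neutral", "surprise", "neutral"]),
    "child_02": (["neutral", "sad", "neutral"],
                 ["neutral", "neutral", "neutral"]),
}

engine, notes = sessions["child_01"]
print(agreement_accuracy(engine, notes))
print(pooled_accuracy(sessions))
print(confusion_counts(engine, notes).most_common(2))
```

Pooling weights each session by its number of segments; averaging the per-child accuracies instead would weight every child equally, and the two figures can differ when sessions vary in length.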


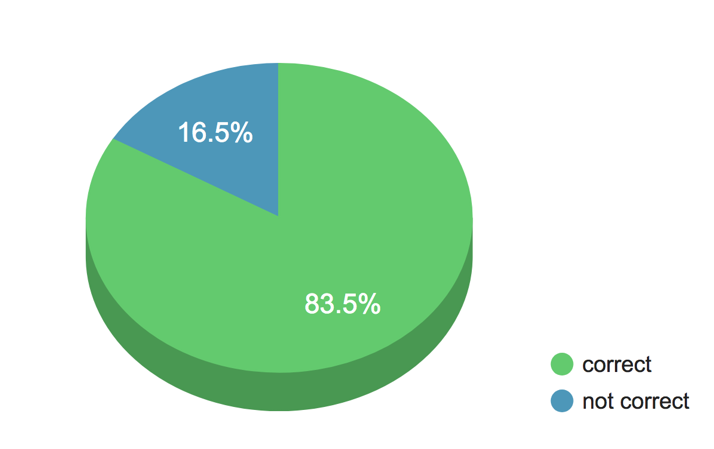

The accuracy of the detected emotions compared with the researchers’ notes is 83.5%.

Smart Doll – Concept http://eyesofthings.eu/?page_id=1888

Smart Doll – Development http://eyesofthings.eu/?page_id=1884